University of Southern California

GameplayQA

A Benchmarking Framework for Decision-Dense

POV-Synced Multi-Video Understanding

of 3D Virtual Agents

Towards Multi-Agent Video Understanding

Why synchronized multi-viewpoint reasoning matters

Many real-world tasks demand reasoning across multiple synchronized viewpoints simultaneously, where Esports and 3D gameplay offer a uniquely controlled testbed for these challenges.

Robot & Autonomous Fleets

Self-driving cars, delivery robots, and surveillance drones share egocentric camera streams to coordinate lane changes, avoid hazards, and plan collectively across agents that each see only part of the scene.

Human Teams

Law enforcement officers wearing bodycams capture the same incident from different angles, requiring cross-pov referencing feeds to reconstruct a coherent timeline.

Sports & Esports Analytics

Broadcast cameras and player POV feeds must be fused to understand formations, predict plays, and generate highlight commentary across synchronized viewpoints.

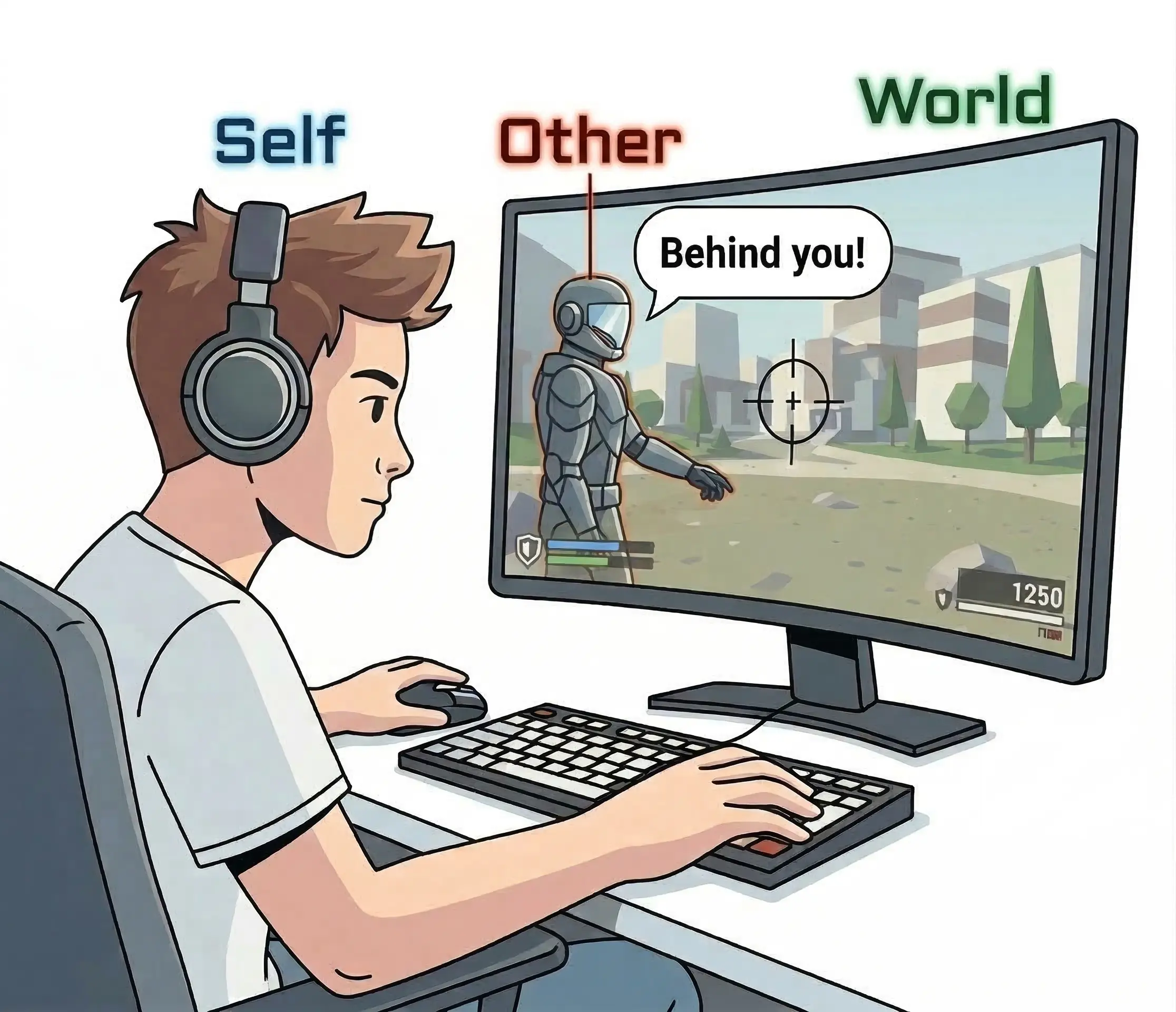

Reasoning Taxonomy

We organize perception around a triadic Self–Other–World entity decomposition. For each entity, we distinguish dynamic and static properties—Action vs. State for agents, Object vs. Event for the world—yielding six primitive label types.

These primitives compose into 15 task categories across three entity perspectives:

- S Self — questions about the agent’s own actions, states, and decisions as seen from its first-person POV.

- O Other — questions about the behavior, intent, and actions of other agents observed in the gameplay footage.

- W World — questions about environmental objects, events, and state changes in the 3D game world.

Annotation Software

We developed a custom multi-track timeline annotation tool purpose-built for dense, synchronized multi-POV gameplay captioning.

Example Questions

Browse diagnostic QA pairs across cognitive levels. Each slide pairs a gameplay video clip with its corresponding question.

Leaderboard

Model performance across task categories. Click any column header to sort. = best, = second best.

| Model | All | ActRec | StaRec | ObjRec | EvtRec | SOC | X-Ent | TsRef | TimLoc | AbsRec | OccCnt | Order | Intent | SyncRef | X-VOrd | POV-ID |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| L1 · Single Reference | L2 · Temporal | L3 · Cross-Video | ||||||||||||||

Error Source Analysis

Fine-grained error analysis across four dimensions reveals that models fail primarily on temporal and cross-video grounding rather than scene-level perception, and that game pace, video length, and number of synchronized perspectives all compound errors.

Error Rate by Distractor Type

Cross-video and temporal distractors cause the most errors

Error Rate by Game

Fast-paced shooters are the hardest

Error Rate by Video Duration

Error increases with video length

Error Rate by Number of Videos

Error scales with number of synchronized videos

Language Prior and Temporal Ablation

To disentangle visual grounding from temporal reasoning, we evaluate GPT-5 Mini under degraded input conditions: no video, a single random frame, and shuffled frames.

| Condition | All | L1 | L2 | L3 |

|---|---|---|---|---|

| Baseline (Full Video) | 62.7 | 67.2 | 61.9 | 60.6 |

| No Video | 29.4 | 36.0 | 29.1 | 24.2 |

| Random Frame | 41.7 | 52.9 | 40.9 | 33.7 |

| Shuffled Frames | 54.8 | 63.1 | 52.6 | 53.4 |

−33.3

No Video vs. Baseline

Language priors alone are insufficient

−21.0

Random Frame vs. Baseline

Static content helps but can’t replace temporal dynamics

−7.9

Shuffled Frames vs. Baseline

Temporal ordering is critical for reasoning tasks

Team

Citation

@article{wang2026gameplayqa,

title={GameplayQA: A Benchmarking Framework for Decision-Dense

POV-Synced Multi-Video Understanding of 3D Virtual Agents},

author={Wang, Yunzhe and Xu, Runhui and Zheng, Kexin and

Zhang, Tianyi and Kogundi, Jayavibhav Niranjan and

Hans, Soham and Ustun, Volkan},

year={2026}

}